How Draft One upholds transparency for AI-assisted police reports

Reporting takes time: up to 15 hours a week for the average U.S. officer, according to Axon’s research. Draft One, launched in early 2024, generates initial police report narratives from body-worn camera transcripts, helping officers reclaim time for patrol, investigations, and community engagement. Once Draft One creates an initial draft, the officer follows a review workflow to check the narrative and make any necessary edits or corrections.

When developing AI for public safety, transparency and accountability are essential. That's why Axon is committed to responsible innovation in everything we build. With Draft One, that commitment comes to life through built-in transparency features, including the critical role of keeping a human in the loop. Draft One is built with safeguards to make its use visible at every stage, creating multiple layers of accountability so that law enforcement agencies, officers, and the public can understand when and how AI assistance is being used to help write reports.

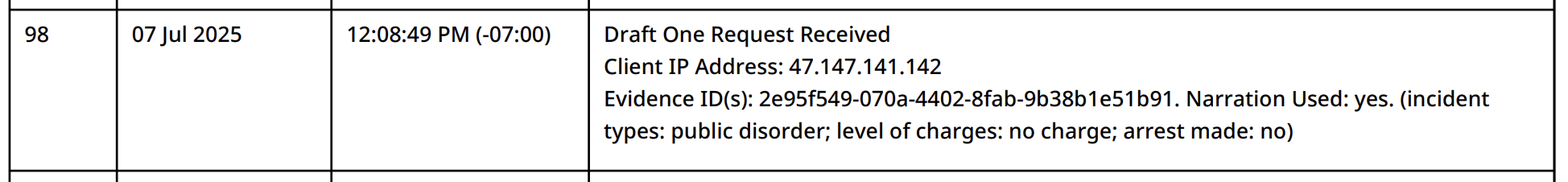

Permanent digital records

Every time Draft One generates a narrative, that action is recorded in an unalterable digital audit trail. This system captures who used the tool, when they used it, and what evidence was involved. The audit trail even tracks when officers sign required liability disclosures and can detect attempts to bypass organizational safeguards. The result is a permanent record that provides important transparency for evidence handling and discovery requirements, serving as a definitive account of the chain of custody and establishing the authenticity and reliability of digital evidence.

This means that an agency can review a report and confirm whether Draft One was used. This is especially helpful when a case involves shared evidence, because Draft One usage will be visible in the digital audit trail, helping ensure transparency across the chain of justice.

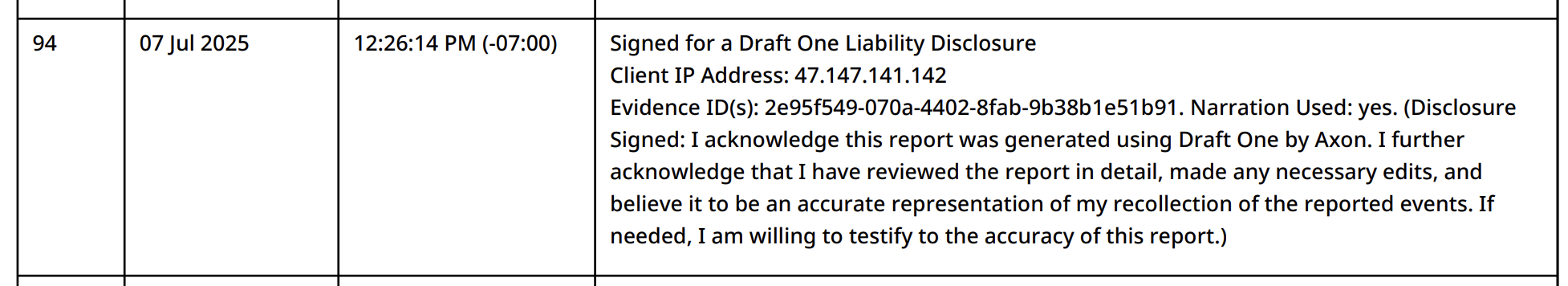

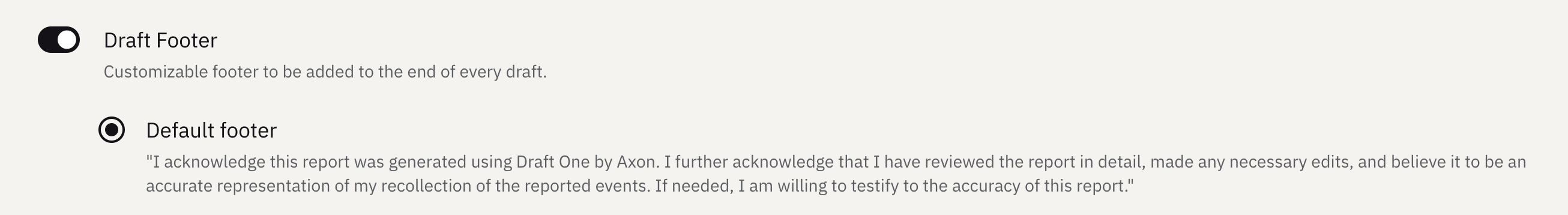

Default disclosure and officer sign-off in every report

By default, Draft One includes a customizable disclosure that indicates the report was developed using AI. Agencies can configure this statement to appear at the beginning or end of a report to clearly document AI assistance.

Accountability remains with the officer. While Draft One creates the initial draft, every report must be edited, reviewed and approved by a human. Officers are required to sign off on the report's accuracy before it moves to the next round of human review:

“I acknowledge this report was generated using Draft One by Axon. I further acknowledge that I have reviewed the report in detail, made any necessary edits, and believe it to be an accurate representation of my recollection of the reported events. If needed, I am willing to testify to the accuracy of the report.”

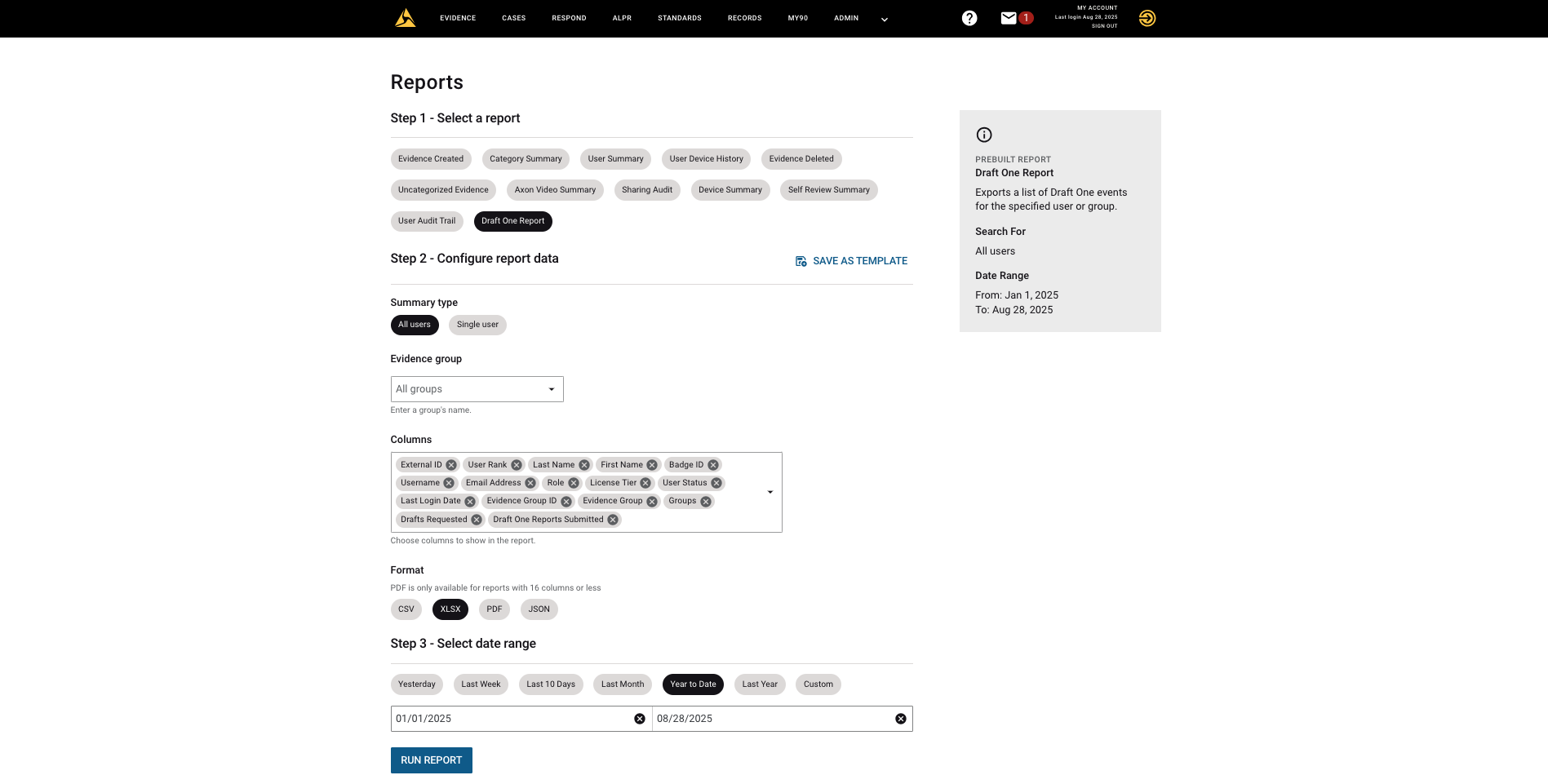

Enhanced oversight tools

To provide greater visibility, Axon offers self-service usage reports for Draft One. These show how many drafts have been generated and submitted per user, along with the specific evidence items used. Agencies can conduct their own oversight and analysis without external support.

Shaping the future

While transparency is a cornerstone of Draft One, it’s one element of a broader set of safeguards grounded in Axon’s responsible innovation framework. These protections help ensure that officers, not technology, remain the decision-makers in key moments, and that default settings limit initial use. Draft One uses placeholders to require human editing and, by default, is limited to minor incident types, helping agencies gain experience with lower-severity reports first. Agencies also have full control over which officers in their agency use Draft One and for which types of reports.

AI can help make policing safer and more effective, but only when applied with transparency, accountability and human oversight. Draft One enhances officer capabilities while maintaining the visibility and control that policing requires. As we continue developing AI tools for law enforcement, we’re guided by our principles of responsible innovation. We’re also committed to working with prosecutors, defense attorneys, community advocates and other stakeholders to guide the responsible evolution of Draft One and other AI technologies, including adapting them as laws change.